In the previous article we got to grips with the fundamental behaviour of qubits by reviewing the historical account of regular bits. Qubits and Bits share a fundamental property: they are both two-state systems and the previous article focused on this, explaining that the bright minds of centuries past had figured out how to perform mathematical operations on pretty much any physical thing with two states.

A tremendous achievement.

But qubits are also two-state systems, so in exactly the same way for bits, they also store data and we can perform operations on them. The difference is that they also exhibit quantum properties.

But what is a quantum property? And how do we express them on paper?

Describing a quantum property requires the use of

Qubits Require Complex Numbers

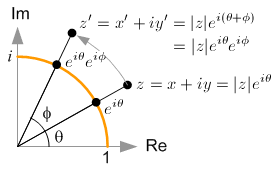

Let’s think about why we need complex numbers to describe qubits. Essentially it boils down to the fact that rotation in the Euclidean plane using real numbers reduces to simple multiplication using complex numbers.

Multiplication is much, much easier to do than trigonometry, so the equations of quantum mechanics look and feel much simpler using complex numbers.

If you are not comfortable with complex numbers, fear not, I will try to reference them sparingly in what follows. If anything, just imagine complex numbers as pairs of real numbers that might describe a point on a map (e.g. latitude and longitude are both real numbers, the pair together describes a point on the Earth. That point is a complex number). In this example though, the longitude is the real part of the complex number while the latitude

is the imaginary part – but these are simply labels, but it helps to be able to write down a complex number

as a coordinate (or ordered pair):

where that plus sign is vector addition and which is not a real number but is often referred to as an imaginary number.

Complex numbers are what you get if you extend the real line above and below to infinity.

Complex numbers hold more information than real numbers. This is important, and is often referred to as an object’s degrees of freedom.

For example, a complex number has an extra property called phase denoted for its alternative name argument. When the phase of a complex number is zero or any multiple of

radians then it has no imaginary component (because

will always be zero, and hence

will always reduce to . And this complex number becomes real.

So, complex numbers have an additional degree of freedom, or as I like to say:

Complex numbers (over real numbers) have extra pockets you can put things in…

If you look at the very definition of a complex number on wikipedia you can see that it is just

where and

are real numbers. Thus, a complex number

is just a linear combination of real numbers given in terms of the basis

.

Now, look at the wikipedia definition of a pure qubit state:

See the similarity!?

If qubits are necessarily linear combinations of some basis states , then, by definition, we already have a mathematical object that looks exactly like that! The complex number. So we might as well use them!

Wait…shouldn’t there be four degrees of freedom? After all, each complex number and

has two degrees of freedom each, so surely their sum has four?

Well, no. Quantum Theory is also a theory of probability. Which means we have a this axiom which forces us to constraint our system with the following relation:

which is called the normalisation constraint, and it removes a degree of freedom. In other words, you can write both and

as a system of equations that depend on just 3 variables, namely

like so:

…the so-called Hopf coordinates.

OK, but that’s still three. How do we get to two?

Well, we have that global phase component of the qubit: , which has no physical observable consequences. So we just ignore it, leaving

and this only has 2 variables, namely , and

is the physically significant quantity known as the relative phase.

Since a qubit has 2 degrees of freedom, we can describe it using two coordinates in 2-dimensional space (a plane), hence the usefulness of using the Bloch Sphere to represent it:

But there is more to qubits than just looking like complex numbers…

Qubits Require Matrices

The reason why we need matrices is because of quantum logic gates and the fact that gates always have matrix representations. Furthermore, all binary Truth Tables (which we looked at in the previous article) can be expressed in the form of a square matrix known as a permutation matrix, putting even further emphasis on the need for using matrices.

So how do we go from

to a matrix?

Well, easy. We just write those basis states as vectors:

as

Storing Binary Data Using Logic Gates

Recall from the first article the logic gates of classical bits. For convenience, they are:

- NOT

- AND & NAND

- OR & NOR

- XOR & XNOR

Using these logic gates one can store binary data. How? By building a flip-flop.

Take a pair of NOR logic gates and cross-couple them, now you’ve got yourself a so-called set-reset latch which can store either a 0 or a 1 on a bit indefinitely – which is why, sometimes, flip-flops are called bi-stable circuits: which are circuits that have no charge or discharge time due to the absence of capacitors.

There are other flip-flops too, for example:

- Set-Reset NOR Latch

- Complement Set-Reset NAND Latch

- Set-Reset AND-OR Latch

- JK (Toggle) Latch

and there’s more.

If you arrange multiple flip-flops in parallel and you’ve got yourself a register. When a system is capable of performing flip-flops we say that the system has memory.

The point is: logic gates can store binary data – and the question is:

Can qubits store data?

Yes.

But we need qubit gates.

Gates for Qubits

Logic gates for qubits are unitary matrices.

Why?

Because for any time interval over which we are trying to realise a particular transformation (i.e. perform an experiment, or just crunch some numbers) we must use the Schrödinger equation (why? because we are working in quantum theory). This equation, necessarily involves Hamiltonians which, are required to be Hermitian (or self-adjoint) to yield real-world results. This condition of Hermiticity implies that the transformation is unitary.

Because there are more degrees of freedom in a qubit (namely 2, instead of 1 for a classical bit) a quantum logic gate is not only a unitary matrix, it is also a square matrix of size . Right, they’re massive. But that have to be huge because qubits hold a lot of information, so each piece of information in a qubit must get acted on by this matrix.

When you act on a -dimensional vector (a qubit) by a

-dimensional matrix (a qgate) you get a

-dimensional vector back (thanks linear algebra). The entries of this vector are called the amplitudes, and they are represented by greek letters, say

. Here is an example of an output vector after begin operated on by a quantum logic gate:

But this can always be represented in terms of the standard basis:

The Linear Shift of the QFT

We have used the Quantum Fourier Transform (QFT) before in this article here. Let’s now see the raw power of the qubit by performing a QFT on it.

In dimension, a qubit is an

-dimensional column vector:

Then linear algebra states that the matrix multiplication of a matrix and a column vector

is another column vector whose entries are given by

The QFT is a quantum gate, thus, it is a unitary matrix.

We can apply the unitary QFT on it via matrix multiplication on the basis representation:

Let’s do the first multiplication:

Summary

A computer contains a bunch of physical components, such as gears and cams in mechanical computers, relays and switches in classical computers, and transistors in modern computers. In the mechanical computer the physical state is the rotational orientation of the gears, in the classical computer it is the binary on or off state of the relay, and in the modern computer it is the current and voltage in the transistor.

Physical interactions between these components, causing them to change their state, achieves computation. In the modern computer, data (voltages and current) from memory circuits is transferred to the central processing unit (CPU) where they interact with logic gates (such as AND and OR) implemented in series and parallel, producing new voltages and new currents (a.k.a. the output) which can then be stored back in memory circuits and, ultimately, read (or measured) by the user.

References

[1] https://courses.edx.org/c4x/BerkeleyX/CS191x/asset/chap5.pdf

[2] Nielsen, N. & Chuang, I. L. – Quantum Computation and Quantum Information, Cambridge University Press, (2010).

I just discovered your blog, it seems great, I am checking articles…

But, in this one, just before the summary, in the “Let’s do the first multiplication”, I get a “Formula does not parse” in the latex formula… could you fix it, please?