I’m often asked: “Why are qubits so weird?”

The answer is that they are not so much weird as they are different. And I would go as far as saying that they are actually simpler than you might expect.

The classical bit was invented first, so naturally, it seems more normal to us. Born in 1948 when Claude E. Shannon first used the word bit in his seminal paper “A Mathematical Theory of Communication” (Bell System Technical Journal, Vol. 27, pp. 379-423), the bit of information has since become a household name, commonly referred to when talking about how much memory a computer system has.

These computer systems essentially store these bits, or more precisely their states (a 1 or a 0), in complicated electronic circuits. Electrical currents and voltages are used to manipulate and retrieve those 1’s and 0’s in order to provide a perfectly logical answer to some set of instructions that the user of the computer wishes to evaluate (there’s a theory that says this works).

So why is this wrong?

The answer is that it is wrong with respect to the way in which Nature approaches the same problem.

See, we developed computer systems in this way back in the 1930’s and 1940’s simply because we didn’t know how to build a qubit.

A qubit is a much more natural object than a bit, and it took a long, long time before we had the capability to build one. The first computer that had any sort of quantum-like behaviour wasn’t producing results until 1998 – and that was a nuclear magnetic resonance device operating the equivalent of a 2-qubit processor. This was just enough to run only the simplest of quantum algorithms. It wasn’t until 2010 that the first electronic qubit was developed; and then 2015 before we had enough qubits to simulate a hydrogen atom (the simplest atom in the universe).

These days (mid-2019), IBM, Intel and Google have quantum processors with about 50 qubits which can hold their information for about 1 millisecond. Even I have programmed some basic algorithms on the IBM Q Experience 5-qubit machine!

But let’s just say that qubits were 50 years late to the party.

That’s why, in the interim, we all got used to regular old bits, but they’re a hack; we essentially cheated our way to the easiest, simplest method of utilising the natural world to do our logical bidding. It was our archaic attempt to simulate Nature; and it worked, for a while.

Nowadays we find it very difficult to extract any more performance out of our regular old bits, save for simply brute-forcing it by building huge numbers of parallel devices. Even then, we lack the ability to simulate Nature with any sort of complexity; and I mean really simulate it, not just estimate it.

So why do our classical computers fail so hard at simulating reality?

A buzzy answer to this would be that classical computers just don’t get quantum mechanics. Reality, if you choose to believe the postulates, is quantum mechanical. To my knowledge, it has passed every quantum test we’ve thrown at it. Despite the fact that we really only sense the macroscopic world, it’s really operating like a big quantum simulator.

Because of this, if we want to simulate reality (and yes, we do, all the time), we need computers which are not totally blind to quantum mechanics.

And so, here we are, at the end of the second decade of the third millennium, and we’ve just figured out how to build a computer that works with quantum mechanics; and it uses qubits.

Weird little things.

Or are they?

Qubits, like bits, hold (Shannon) information. However, unlike bits, they’re somehow able to hold more information. They do this using probability and a quantum effect called superposition.

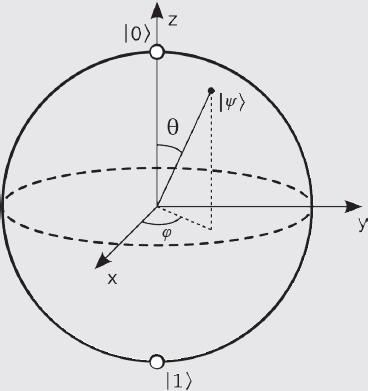

Classical bits can only ever be a 0 or a 1; these are their states. A qubit can also be a 0 or a 1 and, in fact, it is precisely one of these states any time we look at one. The difference is when we are not looking at them. During this time, a qubit can be in a superposition of the 0 and the 1 state. You can’t superpose a classical bit: there’s just nowhere for that superposition information to go! A qubit has room for this information (that extra room is called an extra degree-of-freedom) so you can superpose them. Thus, the (unobserved) state of a qubit can be in a continuum of possible configurations – that is a lot of information to hold. The act of observing that qubit will then force it to be in a 0 or a 1 state.
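To make the idea of superposition and observation concrete, here is a minimal Python sketch. It assumes the usual textbook picture in which a qubit is a pair of complex amplitudes and the chance of seeing a 0 is the squared magnitude of the first amplitude. The names `normalize` and `measure` are hypothetical helpers made up for this illustration; they do not come from any quantum library.

```python
import math
import random


def normalize(alpha, beta):
    """Rescale two complex amplitudes so the probabilities sum to 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    return alpha / norm, beta / norm


def measure(state, rng=random):
    """Observe a qubit: return the result (0 or 1) and the state left behind."""
    alpha, _beta = state
    p_zero = abs(alpha) ** 2  # assumed rule: probability = |amplitude|^2
    outcome = 0 if rng.random() < p_zero else 1
    # After the observation the superposition is gone: only 0 or 1 remains.
    collapsed = (1 + 0j, 0j) if outcome == 0 else (0j, 1 + 0j)
    return outcome, collapsed


# An equal superposition of the 0 state and the 1 state.
plus = normalize(1 + 0j, 1 + 0j)

outcome, after = measure(plus)
repeat, _ = measure(after)  # looking again gives the same answer

print("first look:", outcome, "second look:", repeat)
```

Before the first look the state carries two complex numbers; afterwards it carries only the single bit we observed, which is why the second look always agrees with the first.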

Probability helps us here to understand what is going on: if a system has a probabilistic description then it can be found in any one of the states allowed by that distribution, and the mean value of that distribution tells us what to expect on average. Experimenters can sample this distribution to obtain a number, and this is akin to making an observation because a sample drawn from a distribution is a single number.
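The sampling picture can be sketched in a few lines of Python. This is only an illustration under a simplifying assumption: the qubit is prepared the same way before every observation, so each look is an independent draw with a fixed probability of giving 0. The function name `sample_qubit` and its parameters are invented for this example.

```python
import random


def sample_qubit(p_zero, shots, seed=0):
    """Draw `shots` independent observations; each one is a single 0 or 1."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    return [0 if rng.random() < p_zero else 1 for _ in range(shots)]


results = sample_qubit(p_zero=0.5, shots=10_000)

# Every individual sample is a plain bit...
print("first ten samples:", results[:10])

# ...but the average over many samples estimates the mean of the distribution.
fraction_of_ones = sum(results) / len(results)
print("fraction of 1s:", fraction_of_ones)
```

No single observation ever returns 0.5, yet the average over many observations settles close to it. The distribution only becomes visible through repeated sampling.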

But why should simply looking at a quantum object destroy some of its information?

This was first theorised by the brilliant John von Neumann back in 1932. He proposed that any measurement made by anything is actually a mathematical object called a projection operator – there are a great many reasons for this but we won’t go into them in this article. Suffice it to say that measurement, according to him, always produces one number – the measurement result. Therefore if a system was holding more information than what was measured, such as other possible results, then all those other results are lost.

The challenge between then and now was to find a way to represent this mathematically. The maths to describe classical bits is easy and we’ve known how to do Boolean algebra for a long, long time – and bits do a great job at obeying those rules. Finding mathematical objects that behave like quantum systems was a lot more difficult to do, and contributed to the overall 50 year lag.

To describe a qubit mathematically we had to expand our field of operation to the complex plane. Here we get an additional degree of freedom to play with. Objects on the complex plane get two properties: magnitude and phase. This additional property allows the maths to cope with the additional information inherent in qubits.
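As a rough illustration of magnitude and phase, the Python sketch below builds two complex numbers with the same magnitude but different phases, using only the standard `cmath` module. The interpretation in the comments (squared magnitude as a probability) is the standard textbook convention and is assumed here rather than derived.

```python
import cmath
import math

# Two complex amplitudes: same magnitude, different phase.
a = cmath.rect(1 / math.sqrt(2), 0.0)          # phase 0
b = cmath.rect(1 / math.sqrt(2), math.pi / 2)  # phase 90 degrees

for name, amplitude in (("a", a), ("b", b)):
    magnitude, phase = cmath.polar(amplitude)
    # Assumed convention: the squared magnitude is the chance of this outcome.
    probability = magnitude ** 2
    print(name, "magnitude:", round(magnitude, 3),
          "phase:", round(phase, 3),
          "probability:", round(probability, 3))
```

Both amplitudes give the same probability, so a single observation cannot tell them apart. The phase is the extra piece of information that an ordinary probability between 0 and 1 has no room for.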

Then we needed to build a framework to allow the manipulation of these objects in the complex plane (and there is a case to investigate what happens if we expand the framework again into the quaternions – essentially giving qubits a 3-dimensional role – perhaps we’ll call them cubeits? Although, will they be elbow shaped?). That framework is called a C*-algebra and it contains just enough structure to support all the fundamental axioms of quantum mechanics. Finally, we can build a Hilbert space out of all the possible operations we can perform experimentally. One of those operations is the act of measuring, and mathematically, such an operation will destroy information that is not ultimately measured – so says the maths, and so does Nature.

While the last paragraph was a bit technical, the point was that there is indeed a mathematical framework that can describe the strange behaviour of quantum systems. But most importantly: that framework is far, far less restrictive and presumptive than the framework of Boolean algebra.

So we developed a more general, less structured mathematical theory and we got a better ability to model the complexities of Nature.

Wait, we use less structure and we can model more complex stuff… Sounds strange?

Not really.

And this brings me to the point of this article. We think Nature (and by extension qubits) is more complicated, because the math needed to understand it is more difficult to comprehend. But the only reason for this is that the math we are used to is very, very structured and presumptive – giving the impression of simplicity. But simplicity comes at the price of extra structure.

To give an example, take the simple act of measuring the distance between two points on a sheet of graph paper. Simple, right?

Wrong!

Mathematically that operation of measuring the distance requires the inclusion of about twenty axioms. I’m not joking. That’s an awful lot; and far more than Nature ever abides by.

So we remove the constraints and end up with a more relaxed theory but now the math is more general and abstract. To the untrained eye this looks more complicated, often coming with a new language and set of weird symbols. But, to the trained professional, it is much, much simpler.

And that is what a qubit is: a more abstract version of a classical bit; it may look more complicated but in reality it is much, much simpler.